
Warranty: 2 years

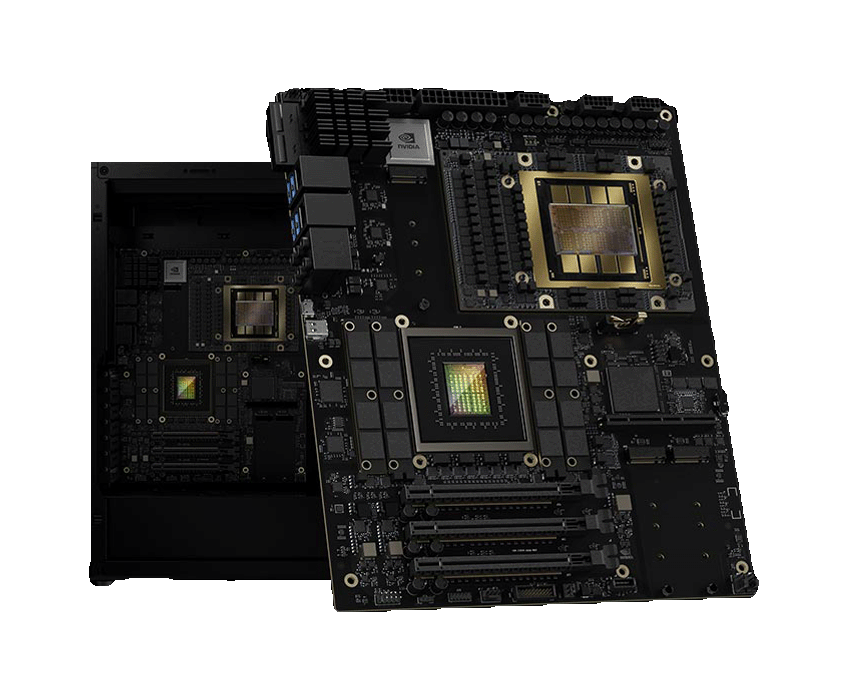

Gigabyte W775-V10-L01 – AI Workstation – NVIDIA GB300 Grace™ Blackwell Ultra Desktop Superchip

SKU: W775-V10-L01

Features:

- Closed-loop liquid cooling solution

- 1 x NVIDIA Blackwell Ultra GPU

- 1 x NVIDIA Grace 72-core Neoverse V2 CPU

- 252 GB HBM3E GPU memory with 7.1 TB/s total bandwidth

- 496 GB LPDDR5X CPU memory with up to 396 GB/s bandwidth

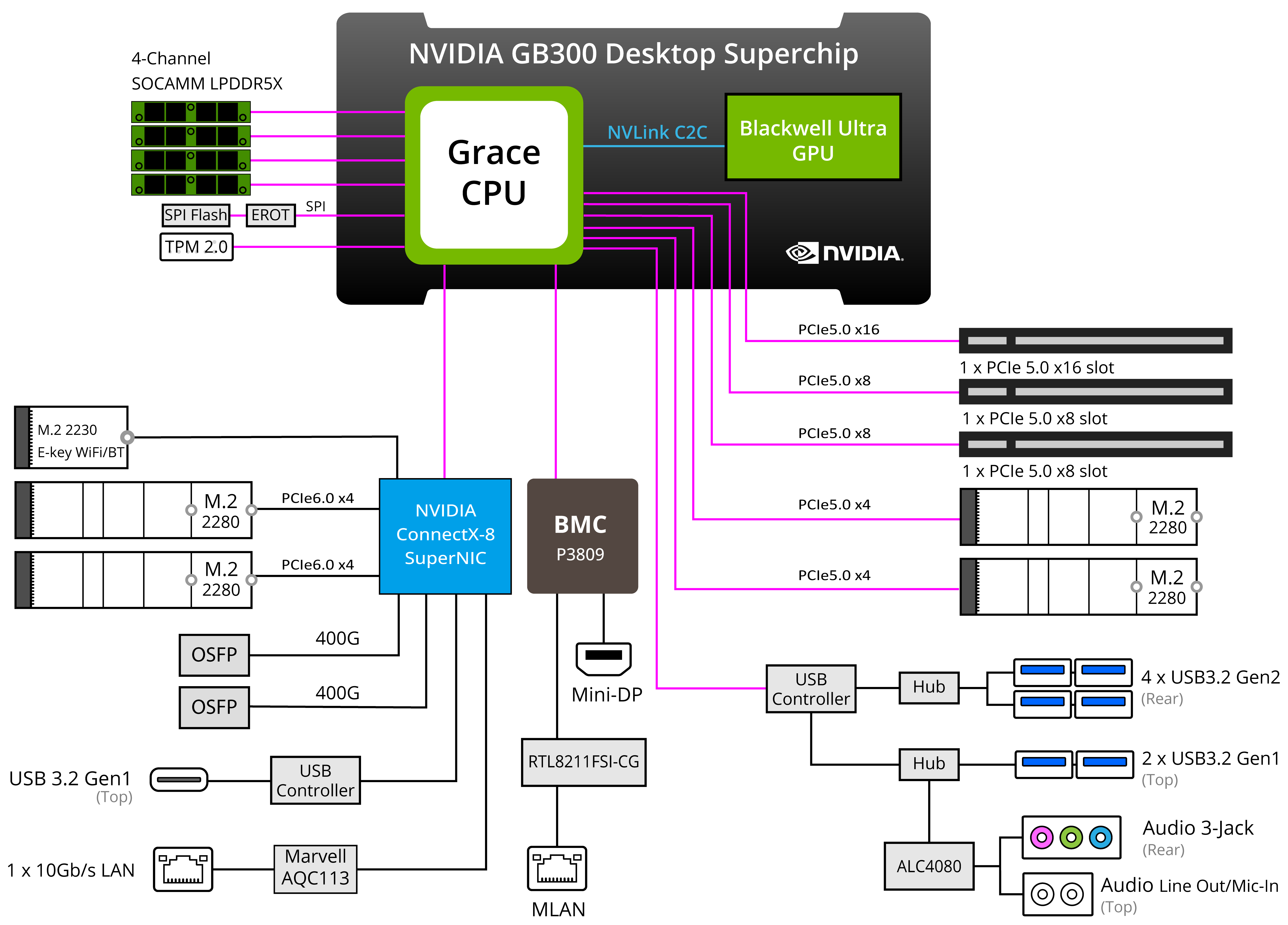

- 2 x 400 Gb/s QSFP ports via NVIDIA ConnectX®-8 SuperNIC™

- 1 x 10 Gb/s LAN port via Marvell AQC113

- 2 x M.2 PCIe Gen6 x4

- 2 x M.2 PCIe Gen5 x4

- 1 x PCIe Gen5 x16 slot

- 2 x PCIe Gen5 x8 slots

- Single 1600W ATX 80 PLUS Platinum power supply
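The 7.1 TB/s HBM3E bandwidth in the specification list caps memory-bound inference speed. A common back-of-envelope estimate (a roofline-style bound, not a vendor benchmark) says single-stream LLM decoding reads every weight once per generated token, so tokens per second cannot exceed memory bandwidth divided by weight size. The sketch below assumes weights-only traffic at batch size 1 and ignores KV-cache reads and compute limits. The 70B-parameter FP4 model and the helper names are hypothetical.

```python
# Roofline-style ceiling for memory-bound, batch-1 LLM decoding.
# Assumptions (illustrative only): every weight is read once per token,
# KV-cache traffic and compute limits are ignored, and GB means 10**9 bytes.

HBM_BANDWIDTH_GB_S = 7100  # 7.1 TB/s HBM3E total bandwidth, from the spec list


def weight_size_gb(num_params: float, bits_per_param: int) -> float:
    """Weights-only footprint in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


def max_tokens_per_second(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound: one full pass over the weights per generated token."""
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    weights = weight_size_gb(70e9, 4)  # hypothetical 70B-parameter model in FP4
    bound = max_tokens_per_second(HBM_BANDWIDTH_GB_S, weights)
    print(f"{weights:.0f} GB of weights -> at most ~{bound:.0f} tokens/s")
```

Real throughput will be lower than this ceiling. The estimate is mainly useful for comparing precisions or models against the same bandwidth figure.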


Description

NVIDIA GB300 Grace™ Blackwell Ultra Desktop Superchip

To meet the demands of large-scale AI models, this platform features the NVIDIA GB300 Grace™ Blackwell Ultra Desktop Superchip and up to 748 GB of coherent memory (252 GB of HBM3E GPU memory plus 496 GB of LPDDR5X CPU memory). This architecture delivers up to 20 PFLOPS of AI performance, enabling teams to develop and run large-scale AI training and inference workloads right at their desks.

Because the system is optimized for the NVIDIA AI software stack, cloud-native and AI-native developers, researchers, and data scientists can prototype, fine-tune, and run inference on large AI models at the desktop, then deploy them seamlessly to the data center or cloud.

- Blackwell Architecture

- Up to 748 GB Unified Memory

- 20 PFLOPS AI Performance

- NVLink-C2C Interconnect

W775-V10-L01 Block Diagram

Technological Breakthroughs

NVIDIA Grace Blackwell Ultra Desktop Superchip

This platform features an NVIDIA Blackwell Ultra GPU, with latest-generation NVIDIA CUDA® cores and fifth-generation Tensor Cores, connected to a high-performance NVIDIA Grace CPU through the NVIDIA® NVLink®-C2C interconnect for best-in-class system communication and performance.

Fifth-Generation Tensor Cores

The system is powered by fifth-generation NVIDIA Blackwell Tensor Cores, which enable 4-bit floating-point (FP4) AI. Because an FP4 value takes half the memory of an FP8 value, FP4 increases performance and the size of model that fits in memory while maintaining high accuracy.
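To make the memory effect of lower precision concrete, here is a rough, weights-only estimate of how many parameters fit in the 252 GB of HBM3E at FP16, FP8, and FP4. It assumes decimal gigabytes. It ignores KV cache, activations, optimizer state, and the per-block scale factors that some FP4 schemes store, so practical limits will be lower. The `max_params` helper is hypothetical and for illustration only.

```python
# Weights-only capacity estimate for the 252 GB of HBM3E at different precisions.
# Assumptions (illustrative only): GB means 10**9 bytes, and per-block scale
# factors, KV cache, activations, and optimizer state are all ignored.

HBM_CAPACITY_GB = 252


def max_params(capacity_gb: int, bits_per_param: int) -> int:
    """Largest parameter count whose raw weights fit in capacity_gb."""
    return capacity_gb * 10**9 * 8 // bits_per_param


for name, bits in [("FP16", 16), ("FP8", 8), ("FP4", 4)]:
    billions = max_params(HBM_CAPACITY_GB, bits) // 10**9
    print(f"{name}: up to ~{billions}B parameters")
# FP16: up to ~126B parameters
# FP8: up to ~252B parameters
# FP4: up to ~504B parameters
```

Each halving of bits per parameter doubles the raw parameter capacity. That doubling is the memory side of the FP4 claim above.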

NVIDIA ConnectX-8 SuperNIC

The NVIDIA ConnectX®-8 SuperNIC™ is optimized to accelerate hyperscale AI computing workloads. With up to 800 gigabits per second (Gb/s) of bandwidth, provided on this system through two 400 Gb/s QSFP ports, ConnectX-8 SuperNIC delivers fast, efficient network connectivity that significantly enhances system performance for AI factories.
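As a rough sense of scale, the sketch below compares idealized transfer times for a 252 GB payload, about the size of the full HBM3E contents, over the two 400 Gb/s ports combined versus the 10 Gb/s LAN port. It assumes full line rate with no protocol overhead or congestion, and it assumes both QSFP ports carry traffic together. Measured transfers will be slower. The `transfer_seconds` helper is hypothetical.

```python
# Idealized time to move a payload over a network link at full line rate.
# Assumptions (illustrative only): no protocol overhead, no congestion, and
# both 400 Gb/s ports used together for an 800 Gb/s aggregate.


def transfer_seconds(payload_gb: float, link_gbit_s: float) -> float:
    """Convert gigabytes to gigabits, then divide by the link rate in Gb/s."""
    return payload_gb * 8 / link_gbit_s


PAYLOAD_GB = 252  # e.g. a full copy of the GPU's HBM3E contents
for label, rate in [("2 x 400 Gb/s ConnectX-8", 800), ("10 Gb/s LAN", 10)]:
    print(f"{label}: {transfer_seconds(PAYLOAD_GB, rate):.1f} s")
# 2 x 400 Gb/s ConnectX-8: 2.5 s
# 10 Gb/s LAN: 201.6 s
```

The factor-of-80 gap in line rate carries straight through to transfer time, which matters when checkpoints or datasets move between the workstation and a cluster.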

NVIDIA DGX OS

This solution runs NVIDIA DGX OS, a stable, fully qualified operating system for AI, machine learning, and analytics applications. It includes system-specific configurations, drivers, and diagnostic and monitoring tools, which make it easy to scale across multiple local systems, cloud instances, or other accelerated data center infrastructure.

NVIDIA NVLink-C2C Interconnect

NVIDIA NVLink-C2C extends the industry-leading NVLink technology to a chip-to-chip interconnect between the GPU and CPU, enabling high-bandwidth coherent data transfers between processors and accelerators.

Large Coherent Memory for AI

AI models continue to grow in scale and complexity. The large coherent memory of NVIDIA Grace Blackwell Ultra lets massive-scale models be trained and run efficiently within one memory pool, because the NVLink-C2C superchip interconnect bypasses the CPU-to-GPU bottlenecks of traditional systems.
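The 748 GB pool is the sum of the 252 GB of GPU HBM3E and the 496 GB of CPU LPDDR5X. The hypothetical check below classifies a working set by where it would have to live. The capacities come from the specification list. The three-way decision is an illustration only; it does not describe how the driver or any framework actually places memory. Note that the LPDDR5X tier is much slower (up to 396 GB/s versus 7.1 TB/s for HBM3E), so data that spills there still pays a bandwidth cost.

```python
# Hypothetical placement check: does a working set fit in GPU HBM alone,
# in the combined coherent pool, or in neither?
# Capacities come from the spec list; the decision logic is illustrative,
# not how the driver or any framework actually places memory.

HBM_GB = 252                      # HBM3E GPU memory
LPDDR_GB = 496                    # LPDDR5X CPU memory
COHERENT_GB = HBM_GB + LPDDR_GB   # 748 GB total coherent memory


def placement(working_set_gb: float) -> str:
    """Return the smallest memory tier that can hold the working set."""
    if working_set_gb <= HBM_GB:
        return "fits in GPU HBM"
    if working_set_gb <= COHERENT_GB:
        return "spills into CPU LPDDR5X over NVLink-C2C"
    return "exceeds the coherent memory pool"


for size_gb in (140, 400, 900):
    print(f"{size_gb} GB: {placement(size_gb)}")
# 140 GB: fits in GPU HBM
# 400 GB: spills into CPU LPDDR5X over NVLink-C2C
# 900 GB: exceeds the coherent memory pool
```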

NVIDIA AI Software Stack

A full-stack solution for AI workloads, including fine-tuning, inference, and data science. NVIDIA’s AI software stack lets you work locally and easily deploy to the cloud or data center using the same tools, libraries, frameworks, and pretrained models.

Workload Optimized Power-Shifting

The system uses AI-based optimizations that intelligently shift power according to the currently active workload, continually maximizing performance and efficiency.